top of page

Biostatistics & Epidemiology

Selection, information, and recall bias

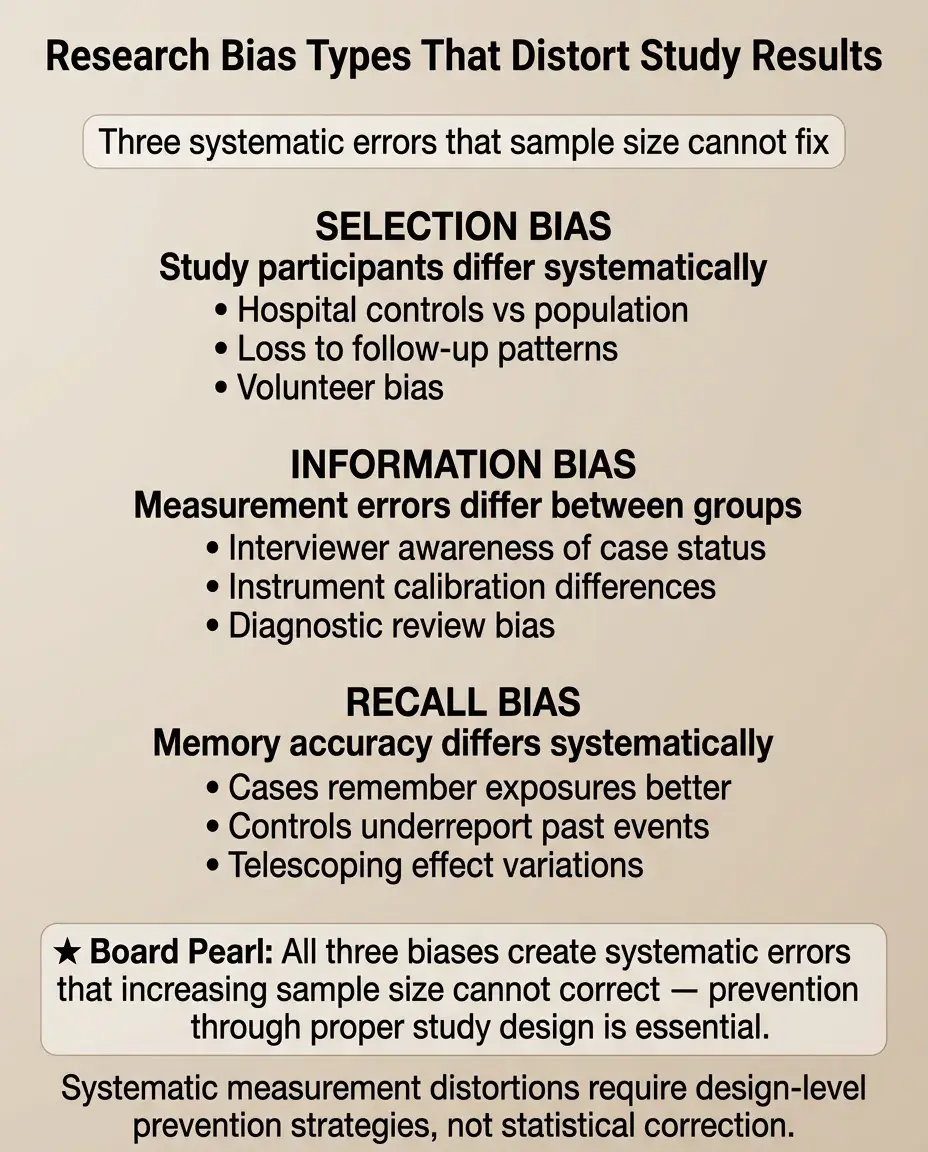

Core Principle of Bias in Medical Research

🧷

Bias is systematic error in study design, data collection, or analysis that leads to incorrect estimates of association between exposure and outcome.

🧷

Unlike random error (which decreases with larger sample size), bias is systematic — it consistently pushes results in one direction and cannot be fixed by increasing n.

🧷

The three most testable biases are selection bias (who gets into the study), information bias (how data is collected), and recall bias (differential memory of exposures).

🧷

Board pearl: Bias threatens internal validity — whether the study accurately measures what it claims to measure within its own population.

Selection Bias: The Problem of Non-Representative Samples

📍

Selection bias occurs when the association between exposure and outcome differs between those who participate in the study and those who do not.

📍

This bias arises during participant recruitment and retention, creating a study sample that systematically differs from the target population.

📍

Common scenarios: loss to follow-up, volunteer bias, healthy worker effect, Berkson's bias (hospital-based studies), and survival bias.

📍

Board distinction: Selection bias affects external validity (generalizability) but more critically threatens internal validity by creating spurious associations.

Mechanisms of Selection Bias

🔹

Selection bias requires that participation is related to both exposure and outcome — creating a backdoor path that distorts the true association.

🔹

Example: studying coffee consumption and lung cancer at a respiratory clinic — smokers (who drink more coffee) are overrepresented among lung patients.

🔹

The bias can create associations where none exist, mask true associations, or even reverse the direction of effect.

🔹

Board pearl: In case-control studies, selection bias often occurs when controls are chosen from a different source population than cases.

Loss to Follow-up as Selection Bias

⭐

Differential loss to follow-up is the most common source of selection bias in cohort studies and clinical trials.

⭐

If sicker patients drop out more frequently from the treatment group, the treatment will appear more effective than it truly is.

⭐

Example: a weight loss drug causes nausea → participants who experience side effects drop out → only successful responders remain → overestimated efficacy.

⭐

Prevention: intention-to-treat analysis includes all randomized participants regardless of adherence or completion.

Classic Selection Bias Examples

✅

Healthy worker effect: employed populations appear healthier than the general population because severely ill people cannot work.

✅

Berkson's bias: hospital-based studies show spurious associations because admission rates differ by disease combination.

✅

Volunteer bias: people who volunteer for studies are systematically different (healthier, more educated, more adherent) than non-volunteers.

✅

Survival bias: studying prevalent cases misses those who died quickly, biasing toward less aggressive disease.

✅

Board clue: "Hospital-based case-control study" → think Berkson's bias.

Information Bias: Systematic Measurement Error

🧠

Information bias (measurement bias) occurs when data on exposure, outcome, or confounders is collected differently between comparison groups.

🧠

This creates systematic misclassification — exposure or outcome status is incorrectly assigned in a non-random pattern.

🧠

Sources include faulty measurement instruments, observer bias, interviewer bias, and differential surveillance between groups.

🧠

Key principle: The bias depends on whether misclassification is differential (differs by group) or non-differential (equal across groups).

Non-Differential vs Differential Misclassification

⚡

Non-differential misclassification: measurement error is equal across all groups → typically biases results toward the null (underestimates true associations).

⚡

Differential misclassification: measurement error differs between groups → can bias results in either direction, potentially creating spurious associations.

⚡

Example: using self-reported smoking status (non-differential) vs measuring smoking only in lung cancer patients more thoroughly (differential).

⚡

Board pearl: Non-differential misclassification makes it harder to detect true associations but doesn't create false ones.

Observer and Detection Bias

📌

Observer bias: researchers' knowledge of participant status influences how they measure or interpret outcomes.

📌

Detection (surveillance) bias: one group receives more frequent or intensive monitoring, leading to higher detection rates of the outcome.

📌

Example: patients on a new drug get monthly liver enzyme checks while controls get yearly checks → more "hepatotoxicity" detected in the treatment group.

📌

Prevention: blinding of outcome assessors and standardized surveillance protocols for all groups.

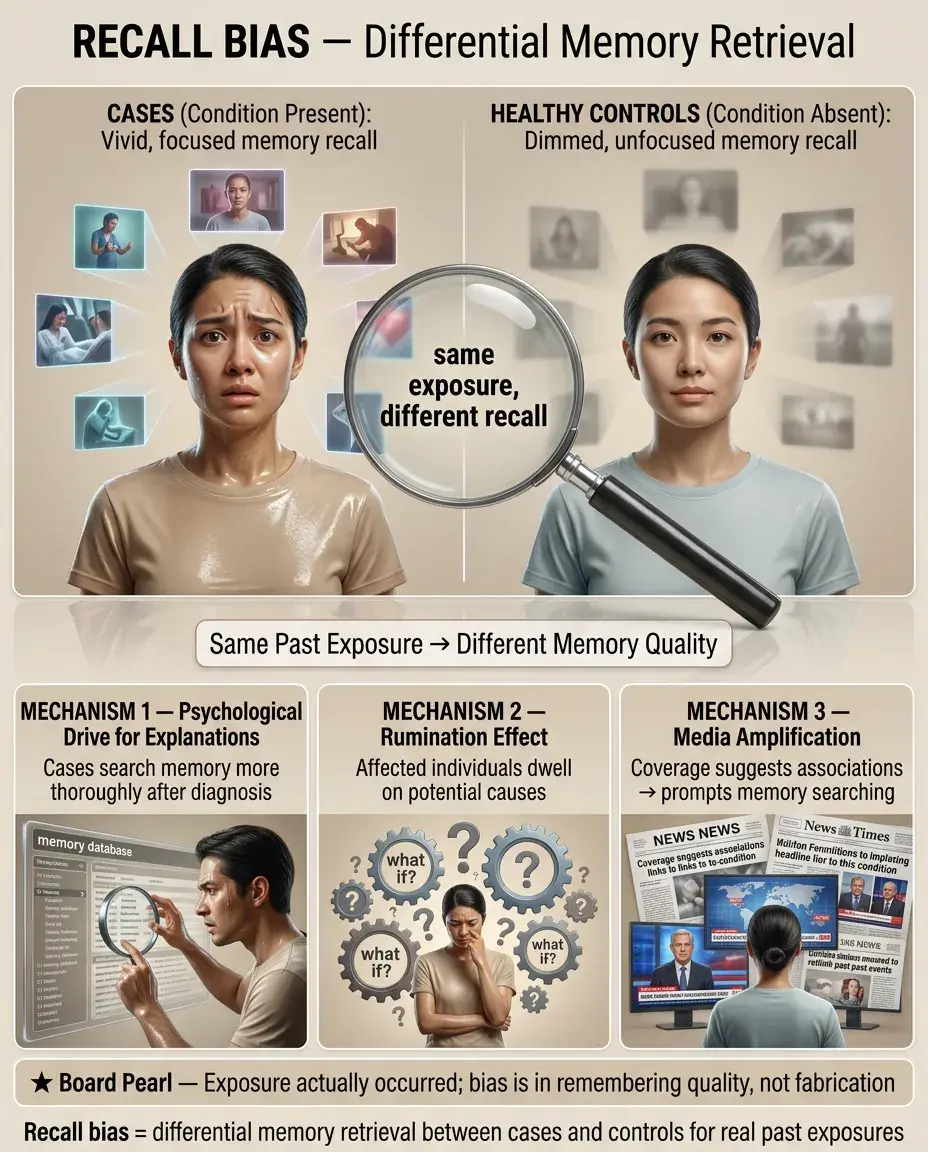

Recall Bias: The Memory Problem

📣

Recall bias occurs when participants with disease remember or report past exposures differently than those without disease.

📣

Classic scenario: mothers of babies with birth defects scrutinize their pregnancy more carefully, "recalling" more medication use than mothers of healthy babies.

📣

This differential recall creates spurious associations between exposures and outcomes that may not truly exist.

📣

Board pearl: Recall bias is primarily a threat in case-control studies where exposure is assessed retrospectively after outcome has occurred.

Mechanisms and Examples of Recall Bias

🔸

The psychological drive to find explanations for adverse outcomes leads to more thorough searching of memory in affected individuals.

🔸

Cases may ruminate on potential causes, leading to better recall of minor exposures that controls forget.

🔸

Example: patients with lung cancer recall more detailed occupational exposures than healthy controls who never thought about workplace chemicals.

🔸

Media coverage can worsen recall bias by suggesting associations that prompt differential memory searching.

🔸

Key point: The exposure truly occurred — the bias is in the differential remembering, not fabrication.

Preventing Selection Bias

🧷

In cohort studies: minimize loss to follow-up through frequent contact, incentives, and flexible scheduling.

🧷

In case-control studies: select controls from the same source population that gave rise to the cases.

🧷

In RCTs: use intention-to-treat analysis to preserve the benefits of randomization.

🧷

Consider selection probability weighting to adjust for known selection factors.

🧷

Board principle: Once selection bias occurs, it cannot be fixed in analysis — prevention during study design is essential.

Preventing Information Bias

📍

Standardize all measurement protocols and train all data collectors identically.

📍

Use objective measures rather than subjective assessments when possible (lab values vs symptom ratings).

📍

Blind participants and investigators to exposure or outcome status when feasible.

📍

Implement quality control procedures: calibrate instruments, monitor inter-rater reliability, audit data collection.

📍

Key strategy: Make measurement error random rather than systematic — random error reduces precision but doesn't bias results.

Preventing Recall Bias

🔹

Use prospective designs (cohort studies) rather than retrospective designs when studying modifiable exposures.

🔹

Validate self-reported exposures with objective records: medical charts, pharmacy databases, employment records.

🔹

Use structured questionnaires with specific prompts rather than open-ended questions.

🔹

Interview cases and controls as close to the exposure period as possible.

🔹

Consider nested case-control studies within cohorts where exposure data was collected before disease onset.

🔹

Board pearl: Prospective cohort studies are immune to recall bias because exposure is assessed before outcome occurs.

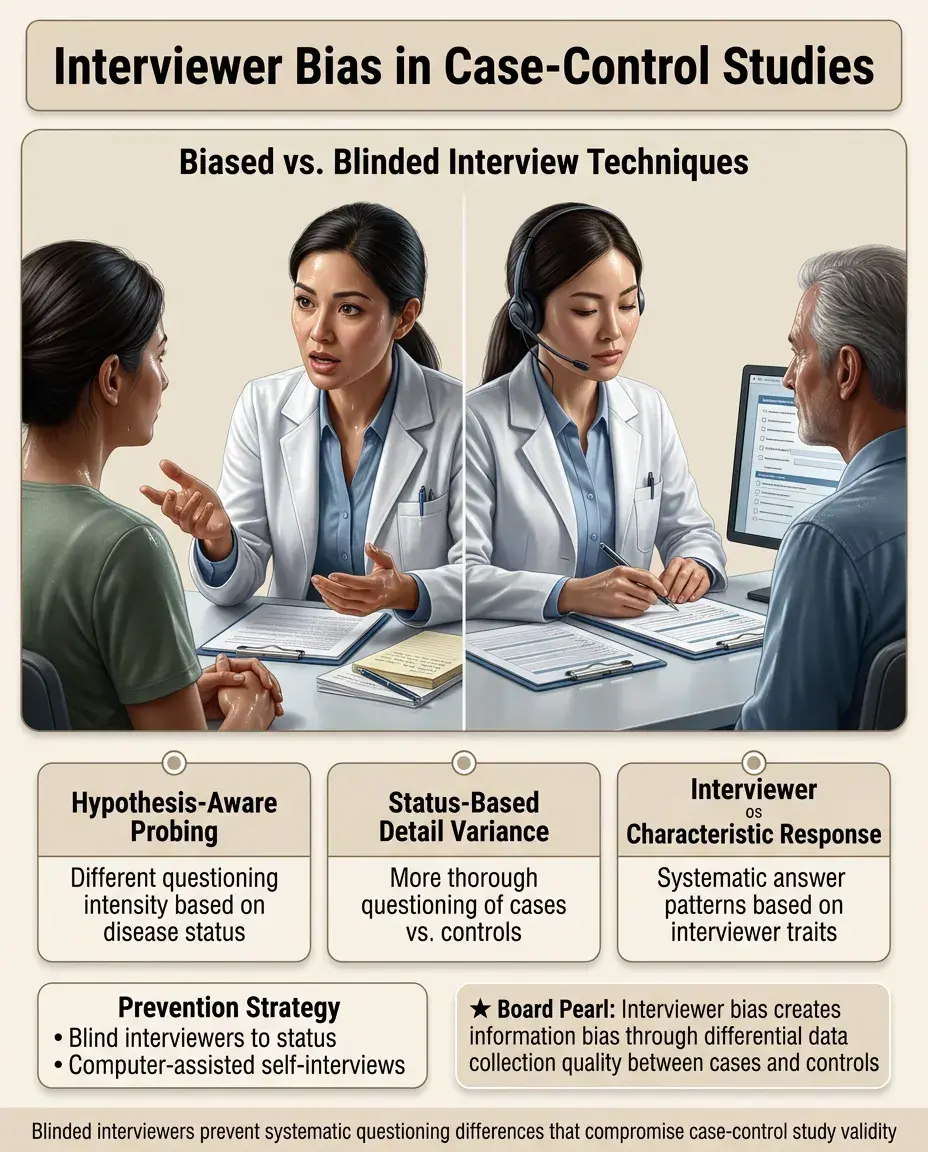

The Interviewer Effect on Bias

⭐

Interviewers who know the hypothesis may probe differently based on participant disease status → information bias.

⭐

Interviewers who know case/control status may ask more detailed questions of cases → differential data quality.

⭐

Participants may respond differently based on interviewer characteristics → systematic response patterns.

⭐

Prevention: Blind interviewers to participant status and study hypotheses; use computer-assisted self-interviews for sensitive topics.

Bias in Screening and Diagnostic Studies

✅

Lead-time bias: early detection makes survival appear longer without actually extending life.

✅

Length-time bias: screening preferentially detects slow-growing diseases with better prognosis.

✅

Verification bias: only positive screening tests get confirmed with gold standard → inflated sensitivity estimates.

✅

Spectrum bias: test performance varies across disease severity but is evaluated in a non-representative spectrum.

✅

Board distinction: These biases make screening programs appear more effective than they truly are.

Publication and Reporting Bias

🧠

Publication bias: positive results are more likely to be published than negative results → literature overestimates treatment effects.

🧠

Outcome reporting bias: researchers selectively report favorable outcomes while suppressing unfavorable ones.

🧠

Time-lag bias: positive results are published faster → early meta-analyses are overly optimistic.

🧠

Language bias: positive results more likely published in English → systematic reviews of English literature are biased.

🧠

Impact: These biases affect systematic reviews and meta-analyses, inflating overall effect estimates.

Distinguishing Bias from Confounding

⚡

Bias is error in how we measure the exposure-outcome relationship; confounding is a true association that muddles interpretation.

⚡

Bias cannot be fixed after data collection; confounding can be addressed through statistical adjustment if measured.

⚡

Bias threatens internal validity; confounding threatens our ability to make causal inferences.

⚡

Example: recall bias if cancer patients remember exposures better (measurement error) vs confounding if an exposure is truly associated with a cancer risk factor.

⚡

Board key: If the problem is "who was selected" or "how we measured," it's bias. If it's "what else is associated," it's confounding.

Bias Direction and Magnitude

📌

Selection and information bias can work in either direction — toward or away from the null hypothesis.

📌

Recall bias typically biases away from the null (creates or exaggerates associations).

📌

Non-differential misclassification typically biases toward the null (weakens associations).

📌

The magnitude depends on: prevalence of misclassification, strength of true association, and correlation between errors.

📌

Board approach: When a question asks about bias direction, consider whether the systematic error makes groups more similar (toward null) or more different (away from null).

Board Question Stem Patterns

📣

"Case-control study where cases were recruited from specialty clinic" → selection bias (Berkson's bias).

📣

"Mothers of children with autism recalled more prenatal infections" → recall bias.

📣

"Patients receiving new drug had weekly visits, controls had monthly visits" → detection/surveillance bias.

📣

"30% of treatment group lost to follow-up" → selection bias threatening validity.

📣

"Interviewers knew treatment allocation" → information/observer bias.

📣

"Self-reported diet questionnaire" → likely non-differential misclassification.

📣

"Only published studies included in meta-analysis" → publication bias.

One-Line Recap

🔸

Selection bias distorts who enters or remains in studies, information bias creates systematic measurement errors between groups, and recall bias leads to differential memory of exposures between cases and controls — all producing systematic errors that cannot be fixed by increasing sample size and must be prevented through careful study design including appropriate control selection, standardized measurements, blinding, and prospective data collection.

bottom of page